Architecture Repository Import

Dragon1 provides three applications for importing data:

- The Architecture Repository (quick CSV import only). This page explains this feature.

- The Import Application

- The Visual Designer

How to Import a CSV File

CSV stands for Comma-Separated Values.

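CSV files come in two variants: one uses a comma as the field separator, the other a semicolon. Dragon1 supports both, but the quick import in the Architecture Repository described on this page expects a comma. The Import Application supports other field separators.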
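If your export tool only produces semicolon-separated files, you can convert them before using the quick import. The following is a minimal sketch using Python's standard `csv` module; the function name `semicolon_to_comma` and the sample data are hypothetical and not part of Dragon1.

```python
import csv
import io


def semicolon_to_comma(text):
    """Rewrite semicolon-separated CSV text as comma-separated CSV text."""
    reader = csv.reader(io.StringIO(text, newline=""), delimiter=";")
    out = io.StringIO(newline="")
    # The writer adds double quotes around any field that contains a comma.
    writer = csv.writer(out, delimiter=",", lineterminator="\n")
    writer.writerows(reader)
    return out.getvalue()


# Hypothetical sample: the name field contains a comma.
sample = "uniqueid;name;class\nA1;Payroll, EU;Application\n"
converted = semicolon_to_comma(sample)
print(converted)
```

Parsing and re-writing the file, rather than replacing every `;` with `,`, keeps fields that already contain commas intact.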

Below are some examples of CSV files you can import.

If you have an Excel sheet, save it as a CSV file first. You can then import that file into the Architecture Repository.

NOTE: If your original data has fields that contain commas, those fields are placed in double quotes when you export to CSV, like this: one,two,"three, four",five,six.
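To see this quoting behavior outside of Excel, here is a small sketch with Python's standard `csv` module. It only illustrates the general CSV convention; it is not how Dragon1 or Excel is implemented.

```python
import csv
import io

fields = ["one", "two", "three, four", "five", "six"]

# Write one record; csv.writer quotes only the fields that need it.
buffer = io.StringIO(newline="")
csv.writer(buffer, lineterminator="\n").writerow(fields)
line = buffer.getvalue().rstrip("\n")
print(line)

# Reading the line back restores the original five fields.
parsed = next(csv.reader([line]))
print(parsed)
```

Note that the opening double quote directly follows the separator. A space between the comma and the quote would become part of the field in a strict CSV parser.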

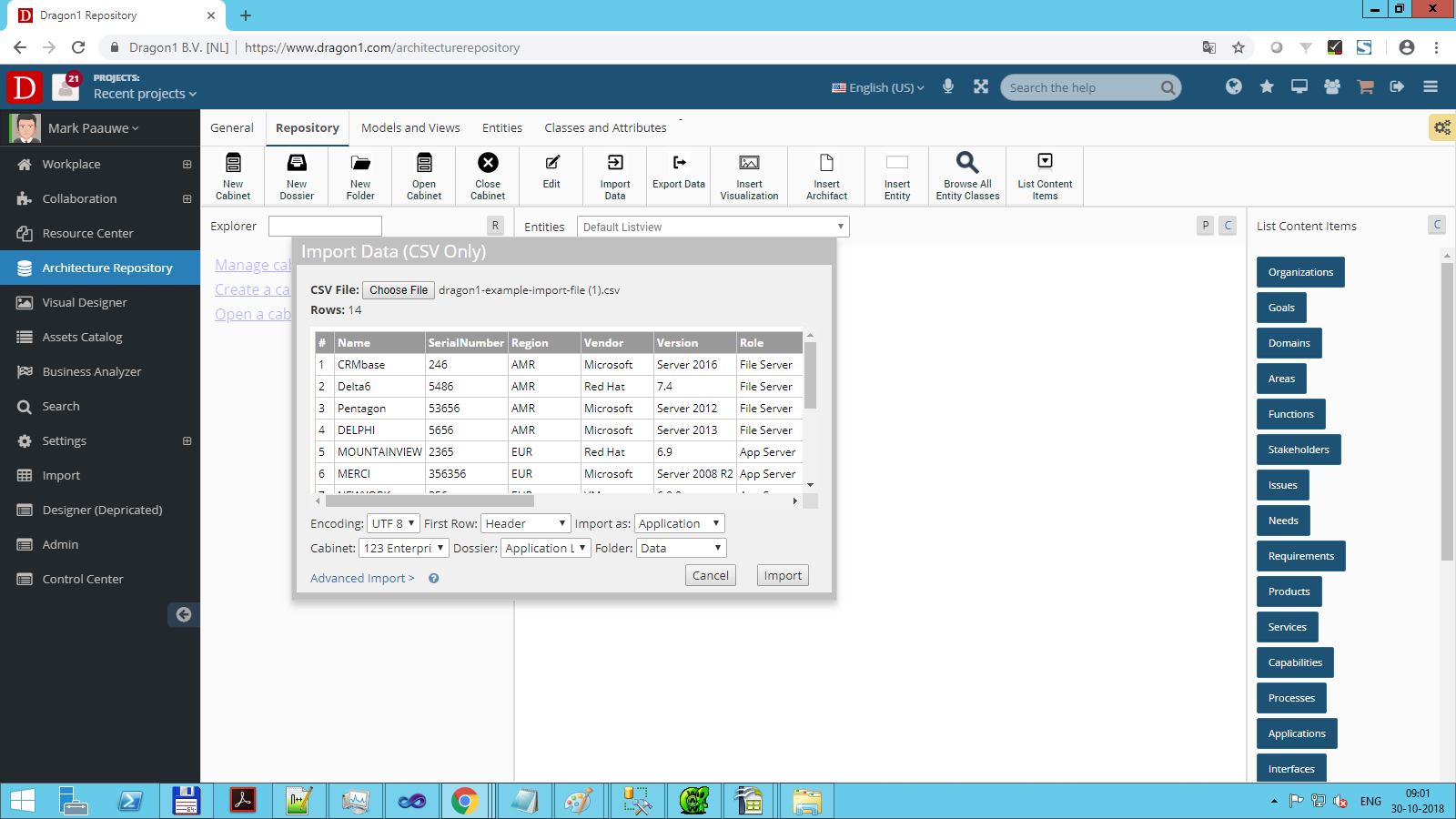

To import a CSV file in the Architecture Repository:

- Log in via www.dragon1.com/login

- Go to the Architecture Repository via www.dragon1.com/architecturerepository

- Click on Choose File (the button label may differ depending on your browser's language settings)

- Select a CSV file

- Check in the preview pane whether Dragon1 recognizes your data correctly

- Select the Entity class. (Check carefully that you do not map the imported data to the wrong class.)

- Select a Cabinet, Dossier, and Folder

- Click on Import to import the data

- The imported data is tagged with a unique import code (like import001). This makes it easier to bulk delete the imported data as a set.

Required Configuration of the Import Data File

For a smooth import, your data file must follow a specific structure. The data can come from an Excel sheet or a CSV file (NOTE: an Excel sheet must first be saved as CSV before you can import it here).

A CSV File for import must always contain column headers.

The required data structure for your data file is:

- There is a column called uniqueid

- There is a column called name

- There is a column called class

- There is a column called action (containing the value Insert, Update or Delete)

- If the CSV file does not have an action column and action values, Dragon1 will not know if you want to insert, update, or delete the data

- Do NOT use a column called ref or id

- Make sure that you use , (comma) as the field separator

- Where possible, avoid double or single quotes in your CSV file data. This reduces the risk of import problems

- Optional column: type

- Optional column: lifecycle

- Optional column: descr

- Optional column: bitmapimage

- Optional column: title

- Optional column: owner

- Optional column: startdate

- Optional column: enddate

- Optional column: category (you need to make sure the category you use here is in the category entity list in the repository)

- Optional column: tags

- Optional column: weight (this is an integer number between -100000 and 100000. It determines the order of the items in a list)

- Optional column: documentlink

- All other columns of data you provide are treated as User Defined Fields (UDF) and stored in the data field of an entity
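The rules in this list can be checked before uploading. The sketch below is a hypothetical pre-flight check, not a Dragon1 tool: the function name `check_import_file`, the sample rows, and the class value `Application` are assumptions, and it assumes action values are compared case-insensitively.

```python
import csv
import io

REQUIRED = {"uniqueid", "name", "class", "action"}
FORBIDDEN = {"ref", "id"}
ACTIONS = {"insert", "update", "delete"}


def check_import_file(text):
    """Return a list of problems found in comma-separated CSV text."""
    rows = list(csv.reader(io.StringIO(text, newline="")))
    if not rows:
        return ["file is empty: a header row is required"]
    # Column names may be upper- or lowercase, so compare in lowercase.
    headers = [h.strip().lower() for h in rows[0]]
    problems = []
    for col in sorted(REQUIRED - set(headers)):
        problems.append(f"missing required column: {col}")
    for col in sorted(FORBIDDEN & set(headers)):
        problems.append(f"column name not allowed: {col}")
    if "action" in headers:
        idx = headers.index("action")
        for line_no, row in enumerate(rows[1:], start=2):
            value = row[idx].strip().lower() if idx < len(row) else ""
            if value not in ACTIONS:
                problems.append(f"line {line_no}: action must be Insert, Update or Delete")
    return problems


# Hypothetical sample files.
good = "uniqueid,name,class,action,lifecycle\nAPP-001,Payroll,Application,Insert,3\n"
bad = "id,name,class\n1,Payroll,Application\n"
print(check_import_file(good))
print(check_import_file(bad))
```

An empty list means the header row and action values match the structure described above. The preview pane remains the authoritative check of how Dragon1 reads the file.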

Lifecycle states are: 0 - Any, 1 - Plan, 2 - Phase In, 3 - Active, 4 - Phase Out, 5 - End of life. Preferably, provide the ID value. You can also provide the text value; the import mechanism will convert it to the ID value.
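If you prefer to normalize lifecycle values to IDs yourself before importing, a lookup like the one below may help. This is a sketch under assumptions: the function name `lifecycle_to_id` is hypothetical, and the case-insensitive matching is a choice made here, not documented Dragon1 behavior.

```python
# Lifecycle states as listed above, keyed by lowercase text value.
LIFECYCLE_IDS = {
    "any": 0,
    "plan": 1,
    "phase in": 2,
    "active": 3,
    "phase out": 4,
    "end of life": 5,
}


def lifecycle_to_id(value):
    """Convert a lifecycle ID or text value to its integer ID."""
    text = value.strip()
    if text.isdecimal():
        number = int(text)
        if number in LIFECYCLE_IDS.values():
            return number
        raise ValueError(f"unknown lifecycle id: {value!r}")
    # Collapse repeated whitespace and ignore case before the lookup.
    key = " ".join(text.lower().split())
    if key in LIFECYCLE_IDS:
        return LIFECYCLE_IDS[key]
    raise ValueError(f"unknown lifecycle state: {value!r}")


print(lifecycle_to_id("3"))
print(lifecycle_to_id("Phase Out"))
```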

EXAMPLE CSV FILE FOR APPLICATIONS

EXAMPLE CSV FILE FOR PROCESSES

EXAMPLE CSV FILE FOR SERVERS INSERT

EXAMPLE CSV FILE FOR SERVERS UPDATE

EXAMPLE CSV FILE FOR SERVERS DELETE

Download the sheets, edit them to your liking, and use them to upload your data.

If your data has user-defined attributes, you can choose any column name, but do not use single or double quotes, spaces, or hyphens in the name. Column names with only alphanumeric characters (a-z and 0-9) work best.
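A quick way to test column names against this advice is a regular expression. The helper names below are hypothetical, and accepting uppercase letters is an assumption based on the note that column names may be written in either case.

```python
import re

# Only letters and digits: no quotes, spaces, or hyphens.
SAFE_NAME = re.compile(r"[A-Za-z0-9]+")


def is_safe_column_name(name):
    """Return True if the name contains only alphanumeric characters."""
    return SAFE_NAME.fullmatch(name) is not None


def suggest_column_name(name):
    """Drop every character that is not a letter or digit."""
    return re.sub(r"[^A-Za-z0-9]", "", name)


for candidate in ["costcenter", "cost-center", "cost center", "owner's team"]:
    print(candidate, is_safe_column_name(candidate), suggest_column_name(candidate))
```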

The uniqueid will be stored in the reference field in Dragon1. This allows Dragon1 to detect updates to your data in later imports.

You can use different classes in a file (processes, applications, ...), but we advise creating and importing a data file per class.

Column names may be written in uppercase or lowercase.

If you follow these rules, then on import Dragon1 will put the name in the name field, the description in the description field, and the uniqueid in the reference field.

Using the reference field, the import mechanism recognizes whether you have already imported a version of this data. If so, you need to approve that existing rows are updated and new rows are inserted.
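To preview which rows would count as updates and which as new rows, you can compare the uniqueid values in a file with the ones you imported earlier. The sketch below only illustrates the idea; it is not Dragon1's matching logic. The function name, the sample IDs, and the lowercase `uniqueid` header are assumptions.

```python
import csv
import io


def split_new_and_existing(csv_text, known_ids):
    """Split the uniqueid values of a CSV into (new, already imported)."""
    reader = csv.DictReader(io.StringIO(csv_text, newline=""))
    new_ids, existing_ids = [], []
    for row in reader:
        target = existing_ids if row["uniqueid"] in known_ids else new_ids
        target.append(row["uniqueid"])
    return new_ids, existing_ids


# Hypothetical file and a set of IDs from an earlier import.
text = (
    "uniqueid,name,class,action\n"
    "SRV-1,web01,Server,Update\n"
    "SRV-2,web02,Server,Insert\n"
)
new_ids, existing_ids = split_new_and_existing(text, {"SRV-1"})
print(new_ids, existing_ids)
```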

User Defined Fields (Max 100 per Row)

When you import data, you will often have your own fields (attributes) in addition to the standard columns. Dragon1 will import up to 100 fields per row.
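You can count the user-defined columns in a header row before importing. The sketch below assumes that the limit of 100 applies to user-defined columns only, which the heading suggests but the text does not state explicitly. The helper names and sample headers are hypothetical.

```python
# Standard column names taken from the required and optional columns above.
STANDARD_COLUMNS = {
    "uniqueid", "name", "class", "action", "type", "lifecycle", "descr",
    "bitmapimage", "title", "owner", "startdate", "enddate", "category",
    "tags", "weight", "documentlink",
}
MAX_UDF = 100  # assumed to count user-defined columns only


def user_defined_columns(headers):
    """Return the headers that are not standard columns."""
    return [h for h in headers if h.strip().lower() not in STANDARD_COLUMNS]


def within_udf_limit(headers):
    """Return True if the number of user-defined columns is at most MAX_UDF."""
    return len(user_defined_columns(headers)) <= MAX_UDF


headers = ["uniqueid", "name", "class", "action", "costcenter", "vendor"]
print(user_defined_columns(headers))
print(within_udf_limit(headers))
```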

In the Architecture Repository, you can edit these fields' labels/names and values.

In the Visual Designer and the Viewer, you can use these attributes and values to generate specific views for your data.

CSV File Structure

The CSV import mechanism in the Architecture Repository is hardcoded to use the comma as the field separator. The Import Application supports other field separators for CSV files.

In line with the CSV standard, fields that contain commas, line feeds, or carriage returns (LF or CR) need to be enclosed in double quotes. A double quote inside a field must be escaped by preceding it with another double quote. Dragon1 can deal with this part of the specification. Normally, exporting a CSV file from professional software creates a correct CSV file, and any compliant CSV file can be imported.

In addition to the CSV standard, you can add comment lines that start with three hash characters (###). Dragon1 ignores such lines on import.
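If you also process the same file with other tools that do not know this convention, you can strip the comment lines first. This is a minimal sketch; the function name and sample content are hypothetical. It works line by line, so it assumes no quoted field contains a line break followed by `###`.

```python
def strip_comment_lines(text):
    """Remove lines that start with ### from CSV text."""
    kept = [
        line for line in text.splitlines()
        if not line.lstrip().startswith("###")
    ]
    # Keep a trailing newline only when some lines remain.
    return "\n".join(kept) + ("\n" if kept else "")


# Hypothetical file with two comment lines.
text = (
    "### server export\n"
    "uniqueid,name,class,action\n"
    "### production servers\n"
    "SRV-1,web01,Server,Insert\n"
)
cleaned = strip_comment_lines(text)
print(cleaned)
```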

Updating Imported Data

As described above, Dragon1 recognizes whether data has already been imported.

If you want to update imported data, import the data again and answer yes when asked whether the data should be updated.

NOTE: Updating imported data cannot be reversed.

Deleting Imported Data

You can delete imported data per entity or as a batch in the Architecture Repository. NOTE: Deleting imported data is irreversible. Only the user who imported the data can delete it.

Check Imported Data With a Report

If you import data, you may want to check the imported data for correctness. For that, we provide basic reports on imports in the Architecture Repository and Business Analyzer.

The activities of importing, updating, and deleting data are logged and available as reports in the Business Analyzer.

For details on the CSV format, read the official RFC 4180 specification.